

Stream Data Integration (SDI) is just what it sounds like: it continuously consumes data streams in real time, transforms them, and loads them to a target system for analysis. Instead of integrating snapshots of data extracted from sources at a given time, SDI integrates data constantly as it becomes available. SDI enables a data store for powering analytics, machine learning and real-time applications for improving customer experience, fraud detection and more.

Data virtualization uses a software abstraction layer to create a unified, integrated, fully usable view of data, without physically copying, transforming or loading the source data to a target system. Data virtualization functionality enables an organization to create virtual data warehouses, data lakes and data marts from the same source data without the expense and complexity of building and managing separate platforms for each. While data virtualization can be used alongside ETL, it is increasingly seen as an alternative to ETL and to other physical data integration methods.

Data replication copies changes in data sources in real time or in batches to a central database. Data replication is often listed as a data integration method. In fact, it is most often used to create backups for disaster recovery.

Change Data Capture (CDC) identifies and captures only the source data that has changed and moves that data to the target system. CDC can be used to reduce the resources required during the ETL “extract” step; it can also be used independently to move data that has been transformed into a data lake or other repository in real time.
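To make the CDC idea concrete, here is a minimal sketch in Python. It assumes a timestamp-based approach, where each source row carries a hypothetical `updated_at` value and the target remembers the last value it has seen. Production CDC tools often read the database transaction log instead, and the names `Row`, `capture_changes` and `apply_changes` are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    id: int
    value: str
    updated_at: int  # hypothetical change marker that grows with every update


def capture_changes(source_rows, last_sync):
    """Return only the rows that changed after the last sync point."""
    return [row for row in source_rows if row.updated_at > last_sync]


def apply_changes(target, changes, last_sync):
    """Upsert the captured rows into the target and return the new sync point."""
    for row in changes:
        target[row.id] = row  # insert a new row or overwrite the stale copy
    return max((row.updated_at for row in changes), default=last_sync)
```

Calling `capture_changes` with the previous sync point and then `apply_changes` moves only the changed rows, so the extract step never rescans unchanged data. Note that this simple sketch cannot see deleted rows, which is one reason log-based capture is often preferred.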

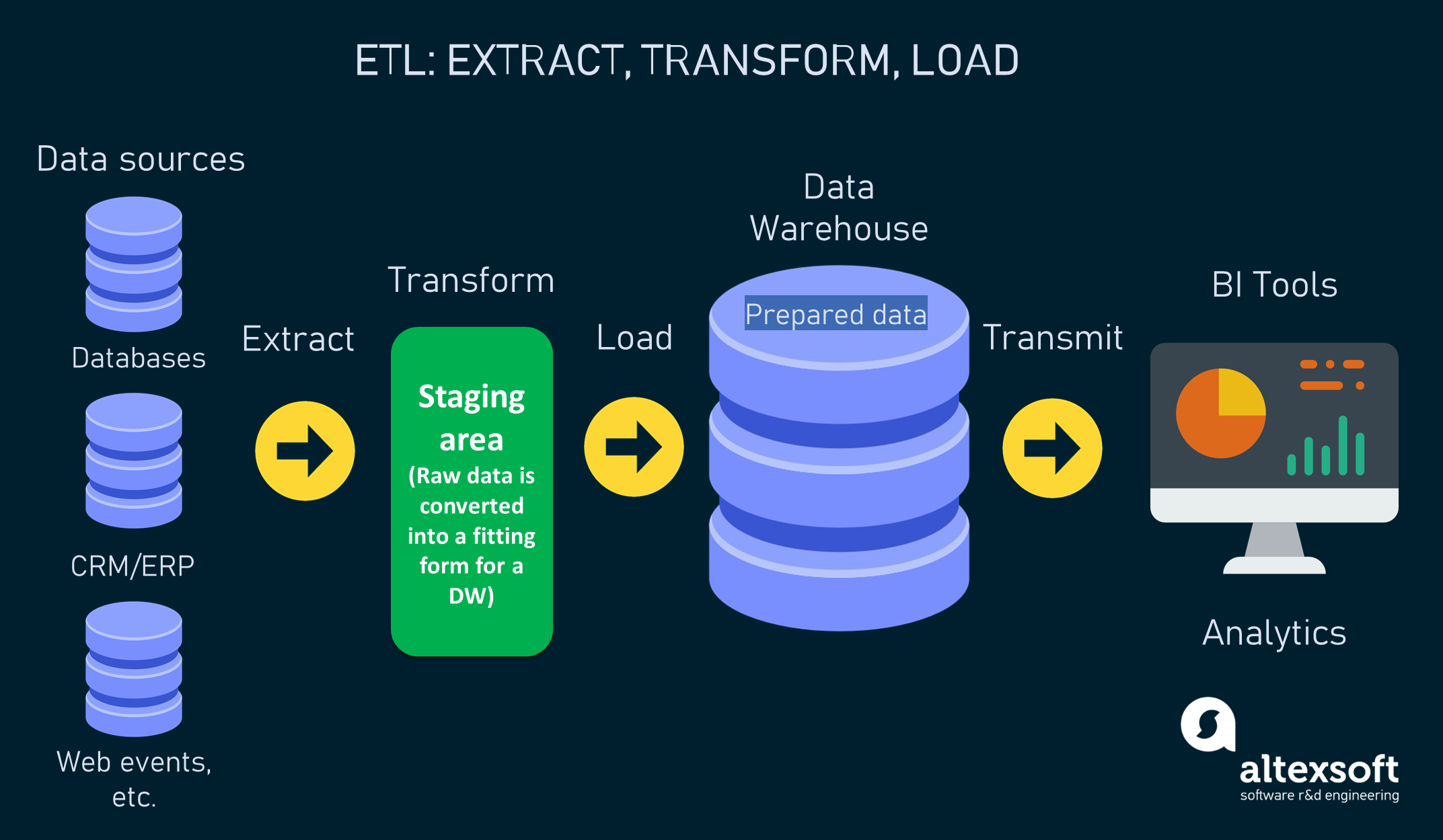

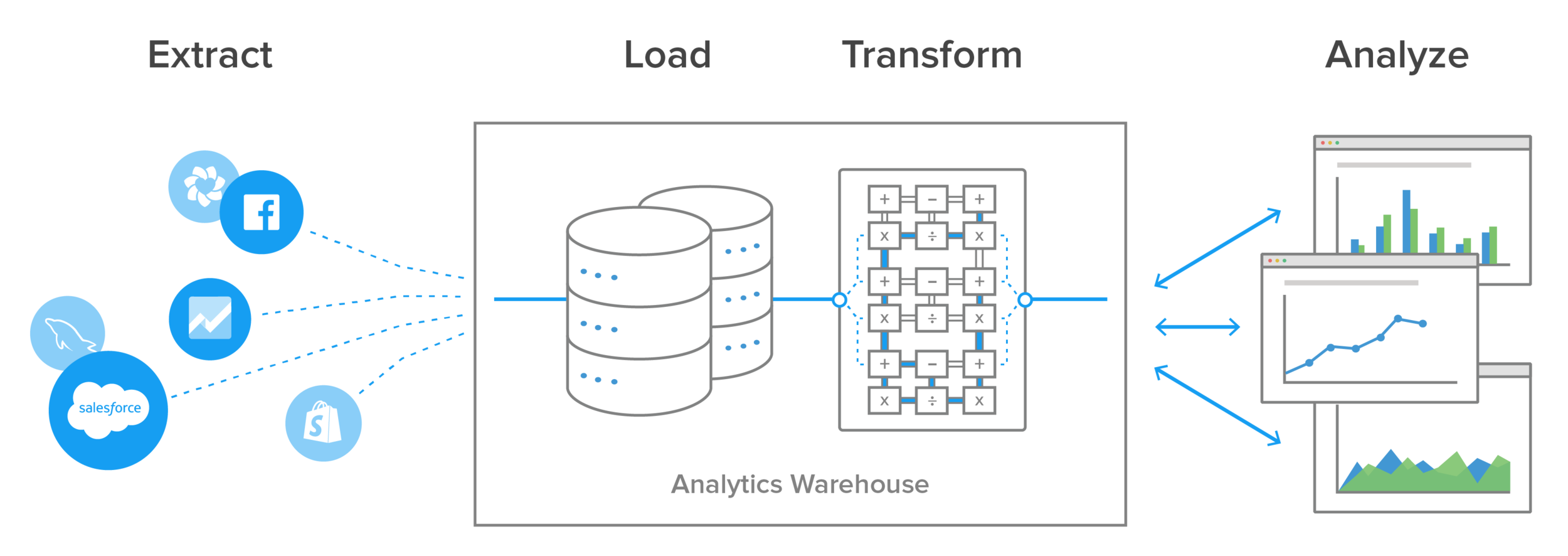

In the last step of ETL, the load, the transformed data is moved from the staging area into a target data warehouse. Typically, this involves an initial loading of all data, followed by periodic loading of incremental data changes and, less often, full refreshes to erase and replace data in the warehouse. For most organizations that use ETL, the process is automated, well-defined, continuous and batch-driven. Typically, ETL takes place during off-hours when traffic on the source systems and the data warehouse is at its lowest.

ETL and ELT are just two data integration methods, and there are other approaches that are also used to facilitate data integration workflows.
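The three loading modes mentioned for the ETL load step (initial load, incremental load, full refresh) can be sketched with Python's built-in `sqlite3` module. This is only an illustration under simple assumptions: the `sales` table, its columns and the function names are hypothetical, and a real warehouse would use its own bulk-loading tools.

```python
import sqlite3


def create_warehouse(conn):
    """Create the hypothetical target table if it does not exist yet."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (id INTEGER PRIMARY KEY, amount REAL)"
    )


def initial_load(conn, staged_rows):
    """Load every staged row into the empty warehouse table."""
    with conn:  # commits on success, rolls back on error
        conn.executemany("INSERT INTO sales (id, amount) VALUES (?, ?)", staged_rows)


def incremental_load(conn, changed_rows):
    """Insert new rows and overwrite changed ones, leaving the rest untouched."""
    with conn:
        conn.executemany(
            "INSERT OR REPLACE INTO sales (id, amount) VALUES (?, ?)", changed_rows
        )


def full_refresh(conn, staged_rows):
    """Erase the table and replace its contents in a single transaction."""
    with conn:
        conn.execute("DELETE FROM sales")
        conn.executemany("INSERT INTO sales (id, amount) VALUES (?, ?)", staged_rows)
```

A typical schedule would call `initial_load` once, `incremental_load` on each nightly batch, and `full_refresh` only occasionally, since it rewrites the whole table.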
